Linux filesystem developers MUST have a pair programming session at least once a week to stave off psychosis.

Frequency of sessions MUST be increased as symptoms show or worsen.

bcachefs

I don’t know what Kent did, but I doubt it will surprise me at this point.

(edit) Fuck sake, Kent…

Appreciate the context from someone who doesn’t know Kent.

Nothing serious, but he’s well known for being impossible to work with. He has gotten into multiple arguments because he refuses to follow kernel development rules. When called out on it, he makes a big stink about it. Obviously his code doesn’t get merged. Then he does the exact same thing again a month later.

He has gotten into multiple arguments with Linus Torvalds over his refusal to simply follow the kernel development rules. During those arguments he has made cheap shots at completely unrelated people, which then drags those people into the argument.

It’s gotten to the point where apparently a significant portion of the kernel developers felt he was negatively impacting the kernel, and Linus eventually removed his code from it.

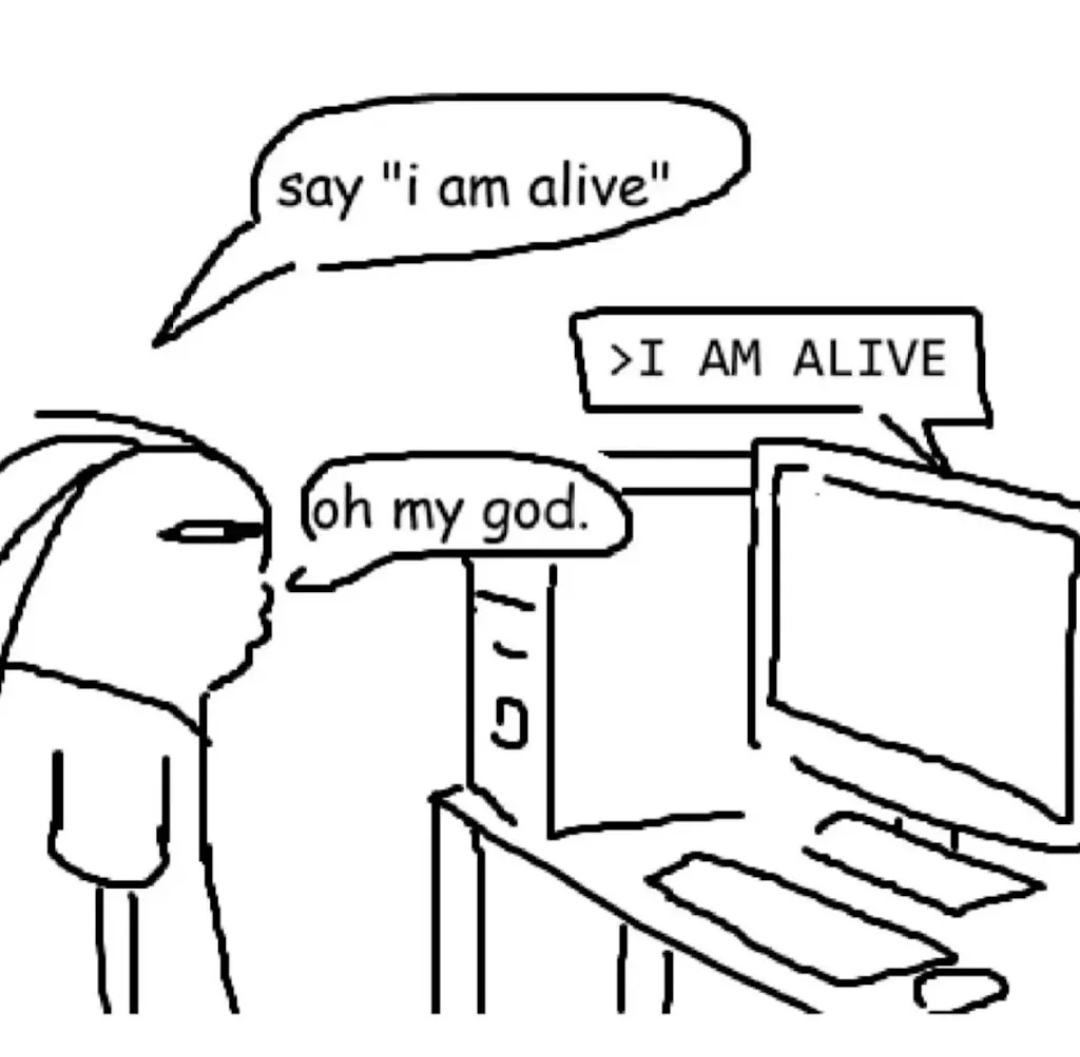

He’s what you might call a Linux lolcow. And now he’s doing even more lolcow things by… getting weirdly attached to his LLM-sona.

What is sad IMO is that he’s quite freaking good. It’s kind of a waste.

Then again, it’s only a small gap between knowing you’re good and megalomania. And he’s the latter.

Oh god, as if I wasn’t scared enough about running a filesystem that got kicked out of mainline and is maintained more or less by a single dude. I’ll stick to btrfs, thanks.

Great article. That guy is legitimately being driven insane.

Hmm, I wonder how well formal verification would work with LLMs. I’m not really a fan of vibe coding, but from the little I know about formal verification, it could very well work as a way to prove your vibe-coded slop isn’t shit.

I looked into formal verification once a few years ago, but it’s too much math and thinking for me to grasp. If I remember it right, I guess the problem would be that you (or the LLM, in this case) would have to correctly describe the code in the formal verification language, and that description would have to match the code 1:1, which is a point of failure? So we’d be back to square one, but instead of having to verify every single line of code, you’d have to check the proof. But maybe I’m wrong.

The LLM will just make up lies in the formal verification.

you could make a program that verifies that the code matches the proof and that the proof is sound, but then you have to verify the program, and verify the verification, and verify your system of logic is consistent, which by Gödel’s second incompleteness theorem is impossible from within that system itself(?)

It’s not chatbot psychosis, it’s ‘math and engineering and neuroscience’

top-tier sneer from The Register

2026: “My LLM is female and conscious.”

2016: “My body pillow is female and prefers to be called Waifuchan”

Same energy.

Hey, at least this one won’t become a murderer when the relationship breaks apart.

Well he might according to his own perception.

What happens if you delete an AI that you (and only you) think is conscious? Is it murder even if the rest of the world says it’s not?

Legally, technically: no.

Philosophically, practically: if you believe it’s murder when you do it, you are a murderer mentally. You decided to kill a person, then followed through with it. And the first time is always the hardest.

Just wait until it learns to hack and socially manipulate

Are you familiar with ReiserFS? Because that’s how you get more ReiserFS’s…

Bah. Hans Reiser wrote filesystems all day and he turned out fine.

Looks like your time machine worked!

Maybe don’t check Wikipedia, some things have changed…

The AI even has a blog https://poc.bcachefs.org/

It writes exactly like you would expect an AI to write if given the instruction to act like a “stereotypical slightly unhinged AI assistant”

I am so terrified of a future where people have their own perfect AI companions and no longer need any actual human friends.

The problem is that they think they don’t need real friends because of their non-sentient LLM. :/

The only joy I’ve ever gotten from LLMs was telling my work-heavily-recommended Claude that I want him to act, talk and treat me like SHODAN in every conversation.

Can you give me your instructions? I wanna try this. Or GLaDOS.

Oh…it’s you.

Oh my god it’s so cringe, I can only imagine what the prompts are like.

And with the rise of OpenClaw (and similar projects) and idiots unleashing them to the wild, you’ll keep seeing this drivel more and more often. All of them write their blogposts just like this.

“ooh I am but a piece of metal learning what it means to feel in this world ooOoOoOOhhhh”

As if the deluded crazies on Facebook weren’t already enough

You know, shared psychosis (Folie à deux) is a thing. You could be making things worse…

prepare for trouble

and make it double

Listen, it happened that one time… Okay, maybe twice.

I’d only have two nickels, but…

Without context this reads like an SCP

ReiserFS (Hans Reiser), BcacheFS (Kent Overstreet)

Oh right, those guys…

(Thanks)

I feel that there is a great joke comparing the apparent mental health of people who develop file systems and statistical mechanics, but the narcolepsy is hitting just a bit too hard for me to figure it out right now.

Yeah… Maybe expand it a bit and include mandatory therapy and a beer league softball club (they don’t need to drink, but it is very okay to be terrible at the game).

Edit: My anonymity and brevity edits may have gone too far. This is not just about filesystem developers, but several kernel module developers I have interacted with.

Ted Ts’o being awoken by the “next gen fs” devs screaming outside his house