Excerpt:

“Even within the coding, it’s not working well,” said Smiley. “I’ll give you an example. Code can look right and pass the unit tests and still be wrong. The way you measure that is typically in benchmark tests. So a lot of these companies haven’t engaged in a proper feedback loop to see what the impact of AI coding is on the outcomes they care about. Lines of code, number of [pull requests], these are liabilities. These are not measures of engineering excellence.”

Measures of engineering excellence, said Smiley, include metrics like deployment frequency, lead time to production, change failure rate, mean time to restore, and incident severity. And we need a new set of metrics, he insists, to measure how AI affects engineering performance.

“We don’t know what those are yet,” he said.
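For what it's worth, the existing delivery metrics Smiley lists are straightforward to compute once you have deployment records. Here is a minimal sketch in Python, assuming a hypothetical `Deployment` record with commit, deploy, and restore timestamps (none of these names come from the article, and incident severity is left out because it needs a separate incident log):

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape, for illustration only.
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def delivery_metrics(deploys, window_days):
    """Summarize deployments observed over a window of `window_days` days."""
    if not deploys:
        raise ValueError("need at least one deployment")
    lead_times = [d.deployed_at - d.committed_at for d in deploys]
    failures = [d for d in deploys if d.failed]
    restores = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deploys),
        "mean_time_to_restore": (
            sum(restores, timedelta()) / len(restores) if restores else None
        ),
    }
```

Tracking these before and after introducing AI tooling would be one way to build the feedback loop the article says is missing.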

One metric that might be helpful, he said, is measuring tokens burned to get to an approved pull request – a formally accepted change in software. That’s the kind of thing that needs to be assessed to determine whether AI helps an organization’s engineering practice.
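A rough sketch of how "tokens burned per approved pull request" could be calculated, assuming you can attribute token usage to each PR. The `PullRequest` fields are hypothetical, and counting tokens from rejected PRs against the total is my assumption about what "burned" should mean:

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    # Hypothetical record shape, for illustration only.
    pr_id: str
    tokens_used: int  # all LLM tokens spent working on this PR
    approved: bool


def tokens_per_approved_pr(prs):
    """Total tokens spent (including on rejected PRs) per approved PR."""
    total_tokens = sum(pr.tokens_used for pr in prs)
    approved = sum(1 for pr in prs if pr.approved)
    if approved == 0:
        return None  # nothing was approved, so the ratio is undefined
    return total_tokens / approved
```

Dividing total spend by approved PRs, rather than averaging over approved PRs only, means abandoned attempts make the number worse instead of disappearing from it.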

To underscore the consequences of not having that kind of data, Smiley pointed to a recent attempt to rewrite SQLite in Rust using AI.

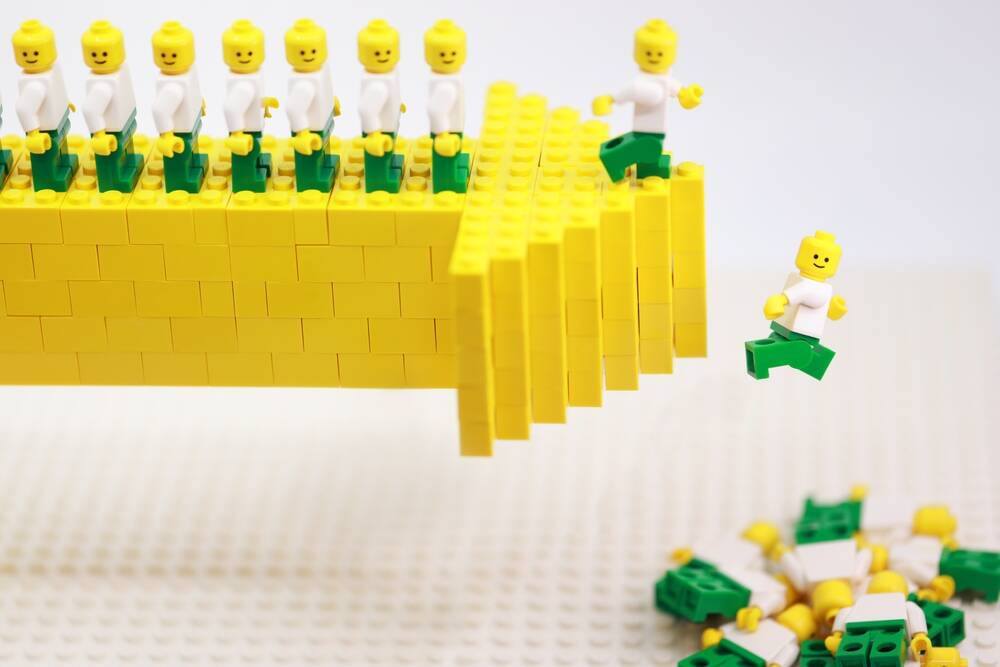

“It passed all the unit tests, the shape of the code looks right,” he said. “It’s 3.7x more lines of code that performs 2,000 times worse than the actual SQLite. Two thousand times worse for a database is a non-viable product. It’s a dumpster fire. Throw it away. All that money you spent on it is worthless.”
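To illustrate the general point that code can pass its tests and still be unusably slow, here is a toy Python sketch. It has nothing to do with the actual SQLite rewrite; the two lookup functions and the data are made up for the example. Both return the same answer, and only the benchmark shows the difference:

```python
import timeit


def find_all_scan(rows, keys):
    """Look up each key by scanning the list: correct, but O(n) per key."""
    out = []
    for k in keys:
        for key, value in rows:
            if key == k:
                out.append(value)
                break
    return out


def find_all_indexed(rows, keys):
    """Build an index once, then do constant-time lookups per key."""
    index = dict(rows)
    return [index[k] for k in keys]


rows = [(i, i * i) for i in range(2000)]
keys = list(range(2000))

# The "unit test": both implementations give the same, correct result.
assert find_all_scan(rows, keys) == find_all_indexed(rows, keys)

# The benchmark: this is the only place the difference shows up.
scan_s = timeit.timeit(lambda: find_all_scan(rows, keys), number=3)
indexed_s = timeit.timeit(lambda: find_all_indexed(rows, keys), number=3)
print(f"scan took {scan_s / indexed_s:.0f}x as long as indexed")
```

The exact ratio depends on the machine and the data size, which is why it has to be measured rather than assumed.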

All the optimism about using AI for coding, Smiley argues, comes from measuring the wrong things.

“Coding works if you measure lines of code and pull requests,” he said. “Coding does not work if you measure quality and team performance. There’s no evidence to suggest that that’s moving in a positive direction.”

The “ceiling” is the fact that no matter how fast AI can write code, it still needs to be reviewed by humans. Even if it passes the tests.

As much as everyone thinks they can take the human review step out of the process with testing, AI still fucks up enough that it’s a bad idea. We’ll be in this state until actually intelligent AI comes along. Some evolution of machine learning beyond LLMs.

We just need another billion parameters bro. Surely if we just gave the LLMs another billion parameters it would solve the problem…

One smoldering Earth later….

I realized the fundamental limitation of the current generation of AI: it’s not afraid of fucking up. The fear of losing your job is a powerful source of motivation to actually get things right the first time.

And this isn’t meant to glorify toxic working environments or anything like that; even in the most open and collaborative team that never tries to place blame on anyone, in general, no one likes fucking up.

So you double check your work, you try to be reasonably confident in your answers, and you make sure your code actually does what it’s supposed to do. You take responsibility for your work, maybe even take pride in it.

Even now we’re still having to lean on that, but we’re putting all the responsibility and blame on the shoulders of the gatekeeper, not the creator. We’re shooting a gun at a bulletproof vest and going “look, it’s completely safe!”

I just feel good when things I make are good, so I try to make them good. Fear is a terrible motivator for quality.

In my experience, around 50% of (professional) developers do not take pride in their work, nor do they care.

I agree. And in my experience, that 50% have been the quickest and most eager to add LLMs to their workflow.

And when they do, the quality of their code goes up.

I agree we’re better off firing them, but I’m not their manager and I do appreciate stuff with fewer memory leaks and SQL injections.

The amount of their output goes up. More importantly, they excrete code faster than good developers equipped with AI, simply because they don’t bother to review generated code. So now they are seen as top performers instead of always lagging behind like it was before AI.

Whether it actually results in better code is debatable, especially in the long run.

Yep. The methodology of LLMs is effectively an evolution of Markov chains. If someone hadn’t recently changed the definition of AI to include “the illusion of intelligence” we wouldn’t be calling this AI. It’s just algorithmic with a few extra steps to try to keep the algorithm on-topic.
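For anyone who hasn't seen one, this is roughly what a word-level Markov chain text generator looks like in Python. It is only a sketch of the baseline being referred to, not of how an LLM works: an LLM predicts the next token with a learned neural network conditioned on a long context, whereas this looks only at the single previous word. The function names are made up for the example.

```python
import random
from collections import defaultdict


def build_chain(text):
    """Map each word to the list of words observed directly after it."""
    words = text.split()
    chain = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        chain[current].append(nxt)
    return chain


def generate(chain, start, length, seed=0):
    """Walk the chain, picking each next word at random from its followers."""
    rng = random.Random(seed)
    word, out = start, [start]
    for _ in range(length - 1):
        followers = chain.get(word)
        if not followers:
            break  # dead end: this word was never followed by anything
        word = rng.choice(followers)
        out.append(word)
    return " ".join(out)


chain = build_chain("the cat sat on the mat and the cat ran")
print(generate(chain, "the", 6))
```

Every word it emits is one that followed the previous word somewhere in the training text, which is the whole model.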

These types of things, we have all the time in generative algorithms. I think LLMs being more publicly seen is why someone started calling it AI now.

So we’ve basically hit the ceiling straight out of the gate and progress is not quicker or slower. We’ll have another step forward in predictive algorithms in the future, but not now. It’s usually a once a decade thing and varies in advancement.

Edit: I have to point out that I initially had hope that this current iteration of “genAI” would be a very useful tool in advancing us to actual AI faster, but, no. Because of “hallucination” (a built-in, unavoidable issue with predictive algorithms trained on an unfiltered mass of data), it seems it is not very capable. At the university I work at, we’ve been trying different things for the past two years, and so far there seems to be no hope. However, genAI is good at summarising the mass outputs of our normal AI, which can produce a lot to comb through, but anything the genAI interprets still needs to be double-checked despite closed-off training.

It’s been unsurprisingly disappointing.

We’re still at a point where logic is done with the same old method of mass iterations. Training is slow and complex. genAI relies on being taught logic that already exists, not being able to thoroughly learn its own. There is no logic in predictive algorithms outside of the algorithm itself, and they’re very logically closed and defined.

Of course LISP machines didn’t crash the hardware market and make up 50% of the entire economy. Other than that it’s, as Shirley Bassey put it, all just a little bit of history repeating.

People have been trying to call things “AI” for at least the last half century (with varying degrees of success). They were chomping at the bit for this before most of us here were even alive.

We are at end-stage capitalism and things other than scientific discoveries and technological engineering marvels are driving the show now. Money is made regardless of reality, and cultural shifts follow the money. Case in point: we too here are calling this “AI”.

something i keep thinking about: is the electricity and water usage actually cheaper than a human? i feel like once the vc money dries up the whole thing will be incredibly unsustainable.